Turnitin has reported a rise in essays with high levels of AI-generated text. It has also reported early signs that students use its in-product AI assistant mainly for feedback and guidance, and that they write their own prompts rather than relying on pre-scripted ones.

Data from the latest version of Turnitin's AI detection tool shows that since October 2025, about 15 per cent of essay submissions contained more than 80 per cent AI-generated writing. Compared with results from Turnitin's original AI detector, this is a marked increase in the share of highly AI-generated submissions.

The figures have reignited debate about how institutions set practical rules for AI use, and how staff can respond without relying solely on detection and enforcement. Turnitin is positioning transparency around the writing process as part of that response.

The company has also disclosed early usage patterns from Turnitin Clarity, a writing-transparency product introduced in July 2025. The tool tracks writing activity throughout an assignment and includes an optional AI assistant for students.

Clarity insights

Turnitin Clarity includes an AI assistant that educators can enable to provide guidance during drafting. Turnitin says the assistant will not write the assignment for the student.

The product also checks for AI-generated writing and text copied from online sources and other student papers, and presents information intended to simplify assessment for educators.

In the first three months of Turnitin Clarity's use, students often framed prompts as requests for evaluation and general feedback. Of the prompts reviewed over a two-month period, nearly a third asked for "review, judgment, or other general feedback".

Turnitin cited examples such as "Is this good?", "Is this a strong conclusion?", and "What can I fix?" The pattern suggests many students are using the assistant as a rapid source of feedback while they write, rather than a tool for automated content generation.

Turnitin also reported that 94 per cent of students wrote their own prompts rather than selecting the tool's pre-written suggestions. The preset prompts include more structured requests linked to assignment criteria, such as asking for feedback aligned to a rubric element.

Prompting skills

Many student prompts lacked specificity or clear goals. Generic questions made up more than half of the prompts in the sample reviewed.

Turnitin judged only 36 per cent of prompts in the feedback category to be effective. It assessed effectiveness based on whether prompts included details and context, set clear goals or parameters, or showed an iterative learning process.

The data points to a growing need for instruction on how to ask precise questions when using AI systems in academic settings. Institutions have increasingly treated "prompting" as a literacy skill, alongside guidance on attribution, acceptable use, and critical evaluation of AI outputs.

Educator feedback

Educators using Turnitin Clarity reported time savings, saying the assistant handled common student questions on citations, style, and structure. This allowed staff to focus on higher-level teaching and assessment.

Educators also said the tool made feedback faster and more consistent during an assignment, attributing this to students receiving immediate guidance rather than waiting for an instructor to be available.

Some said the assistant encouraged responsible AI use by offering a supervised way for students to engage with AI tools. Instructors reported that students appreciated being shown how to use AI responsibly rather than being told any AI use constitutes cheating.

The broader challenge for schools, colleges, and universities is how to handle AI in writing-heavy subjects where individual drafting and authorship are central to assessment. The reported increase in highly AI-generated submissions adds pressure on institutions to set policies that staff can apply consistently and that students can understand.

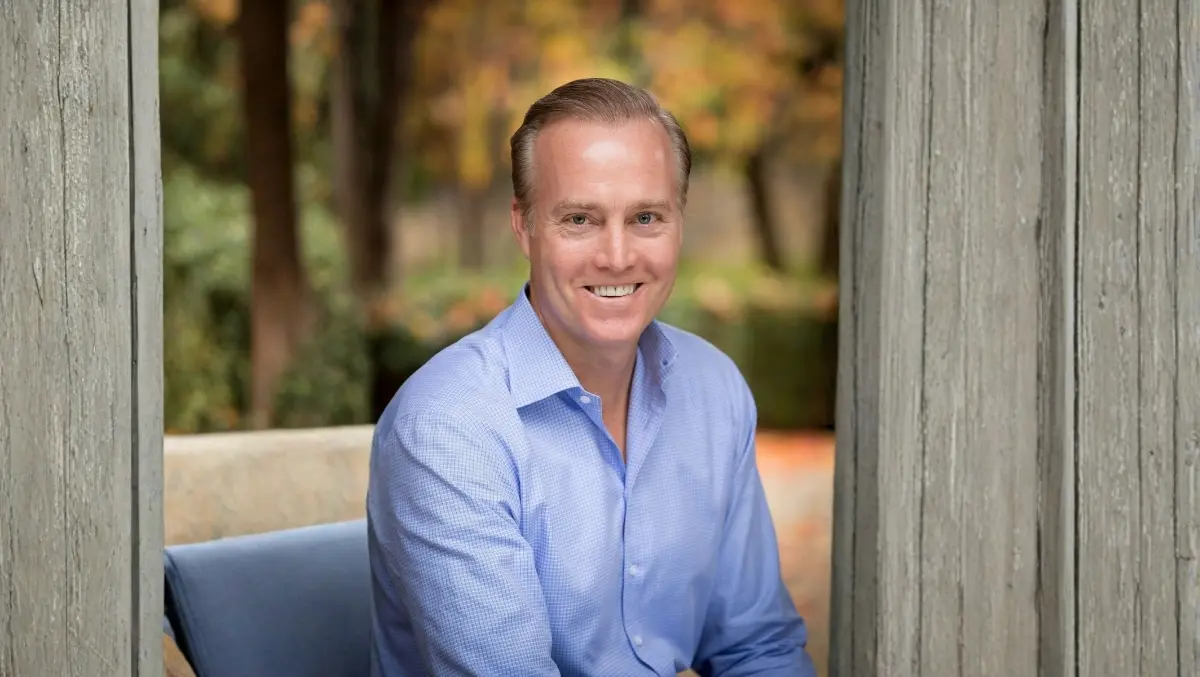

Chris Caren, Chief Executive Officer of Turnitin, said students and staff want clearer rules and more support during drafting.

"The reality is students and educators alike are craving clear guidance on when and how to use AI," Caren said.

He added: "Through our writing transparency tool Turnitin Clarity, we've gathered months of insights into how students are engaging with our AI assistant, and it's clear they are hungry for feedback during the writing process. With educators under increasing pressure to do more with less, we are dedicated to building solutions that make assessing the process and the final product easier for today's educators."

Turnitin said it will continue to develop its transparency tools and AI-related features as institutions formalise policies for AI use in coursework and assessment.