Privacy-first AI smart glasses run models on-device

Brilliant Labs, Neuphonic and TheStage AI have formed a strategic partnership to run vision and conversational AI on consumer devices rather than in the cloud.

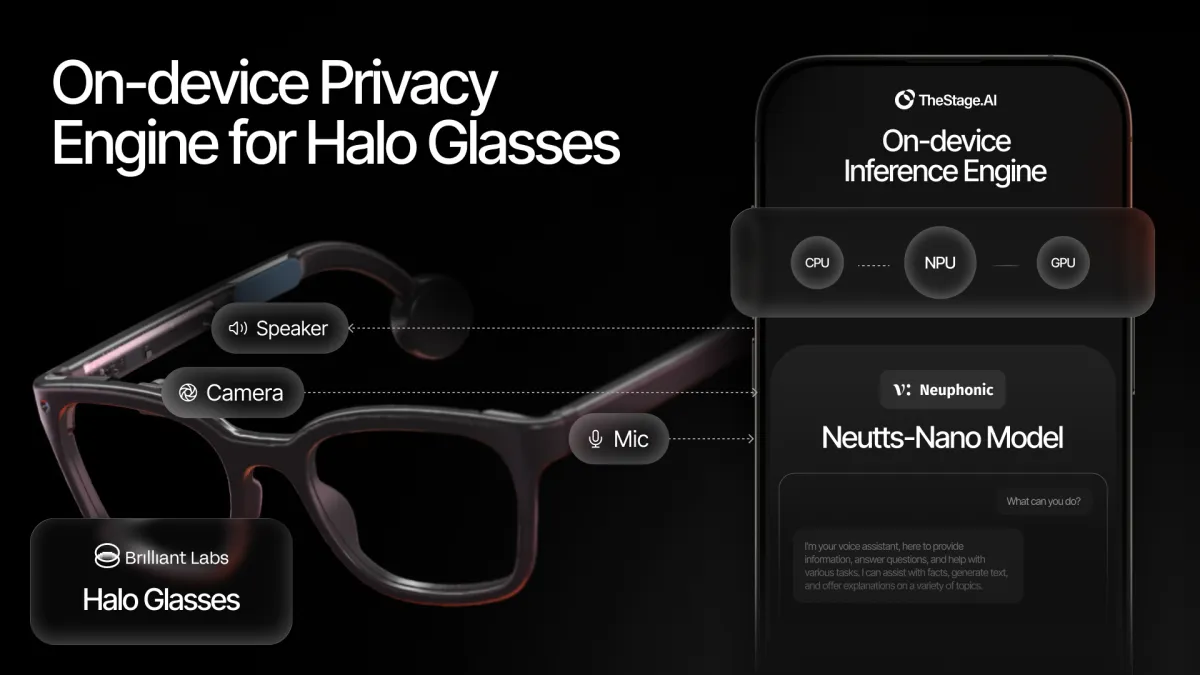

The approach keeps audio and visual data on a user's hardware and reduces the delays that come from sending requests to remote servers. The collaboration spans smart-glasses hardware, local speech models, and software that compiles and deploys models on a paired smartphone.

At the centre of the partnership is Halo, a new pair of smart glasses from Brilliant Labs. Halo will combine on-device vision inference with conversational AI that runs locally. The glasses are expected to cost US$349 and ship in Q1 2026.

Edge processing

The partnership aims to shift inference workloads away from cloud infrastructure. In many consumer AI products, image analysis and speech processing run in remote data centres, requiring voice and visual data to travel across networks for processing.

In the proposed architecture, the system processes inputs locally and converts them into encrypted embeddings. As a result, raw point-of-view video, images and audio do not leave a user's phone or glasses, the companies said.

The work is split across three components. Brilliant Labs provides the eyewear platform and the on-device vision inference stack. Neuphonic supplies conversational AI models designed to run locally. TheStage AI contributes its ANNA optimisation engine, which compiles and deploys models to run on a paired smartphone using GPU and NPU accelerators.

TheStage AI says ANNA helps manage wearable performance constraints, including memory, latency and power consumption. As part of the partnership, it will optimise not only the core voice models but also supporting components such as transcription, wake word detection and diarisation.
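To make the trade-off concrete, the sketch below shows one simple way a deployment tool could choose between versions of a model under a device budget for memory, latency and power. It is an illustration only and does not represent ANNA or any TheStage AI interface. The class names, the `pick_variant` function, the variant labels and every number are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Variant:
    """One compiled version of a model (all figures are made up)."""
    name: str
    peak_memory_mb: float
    latency_ms: float
    power_mw: float
    quality: float  # hypothetical score, higher is better


@dataclass(frozen=True)
class Budget:
    """Upper limits the device can tolerate (hypothetical values)."""
    peak_memory_mb: float
    latency_ms: float
    power_mw: float


def fits(variant: Variant, budget: Budget) -> bool:
    """True when the variant stays within every limit of the budget."""
    return (
        variant.peak_memory_mb <= budget.peak_memory_mb
        and variant.latency_ms <= budget.latency_ms
        and variant.power_mw <= budget.power_mw
    )


def pick_variant(variants: Sequence[Variant], budget: Budget) -> Optional[Variant]:
    """Return the highest-quality variant that fits, or None if none does."""
    candidates = [v for v in variants if fits(v, budget)]
    return max(candidates, key=lambda v: v.quality, default=None)


# Hypothetical variants of a single voice model at different precisions.
VARIANTS = [
    Variant("fp16", peak_memory_mb=900, latency_ms=180, power_mw=1200, quality=0.95),
    Variant("int8", peak_memory_mb=480, latency_ms=110, power_mw=700, quality=0.92),
    Variant("int4", peak_memory_mb=260, latency_ms=80, power_mw=450, quality=0.85),
]
BUDGET = Budget(peak_memory_mb=512, latency_ms=150, power_mw=800)

chosen = pick_variant(VARIANTS, BUDGET)
print(chosen.name if chosen else "no variant fits")
```

With these invented figures the sketch selects `int8`: the `fp16` variant exceeds all three limits, and `int4` fits but scores lower on quality. A real optimiser would measure these values on the target hardware rather than take them from a table.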

Privacy positioning

The companies are pitching the partnership as an alternative to cloud-dependent approaches used by major consumer platforms, contrasting it with products that rely on remote inference for messaging, voice processing and image analysis.

They also pointed to concerns raised in public investigations about whether major platforms have met privacy commitments, arguing those concerns will grow as AI moves from text interfaces into devices with always-on microphones and cameras.

"We believe in a privacy-first future for personal computing. AI glasses are soon going to be everywhere around us: always-on cameras and microphones capturing our lives. That's either exciting or terrifying, depending on where that data lives and who is monetizing it," said Bobak Tavangar, CEO of Brilliant Labs and former Apple program lead.

Tavangar also tied the strategy to open-source development and independent scrutiny of how systems work.

Neuphonic is providing the voice interface for the glasses and companion phone setup. Its models are designed to run locally rather than through hosted APIs, which can introduce network delay and additional data handling.

"When you're having a conversation, speed and privacy are everything. You cannot wait for the cloud to think," said Sohaib Ahmad, CEO of Neuphonic.

TheStage AI describes its role as the layer that bridges hardware constraints and model execution, noting that running conversational AI in a wearable format requires careful optimisation across device resources.

"Great hardware and great models need a bridge, and that is what we build," said Kirill Solodskikh, CEO of TheStage AI.

Product features

Halo will include "context-aware, conversational AI" that can process what it sees and hears in real time. Brilliant Labs also describes a private memory feature that indexes what a user sees and hears for later recall and personalised context.

Another planned feature is Vibe Mode, described as a natural-language interface that generates custom AI mini-apps on demand, from AI agents to enterprise workflows.

The device will run a combined stack in which voice, vision and sensor data processing happens locally. Much of the computation is handled by the paired smartphone, with TheStage AI's tooling targeting execution on device accelerators.

"Running conversational AI on a pair of glasses is a massive computational challenge. You have to manage peak memory, latency, and power consumption to make responses feel immediate," said Solodskikh.